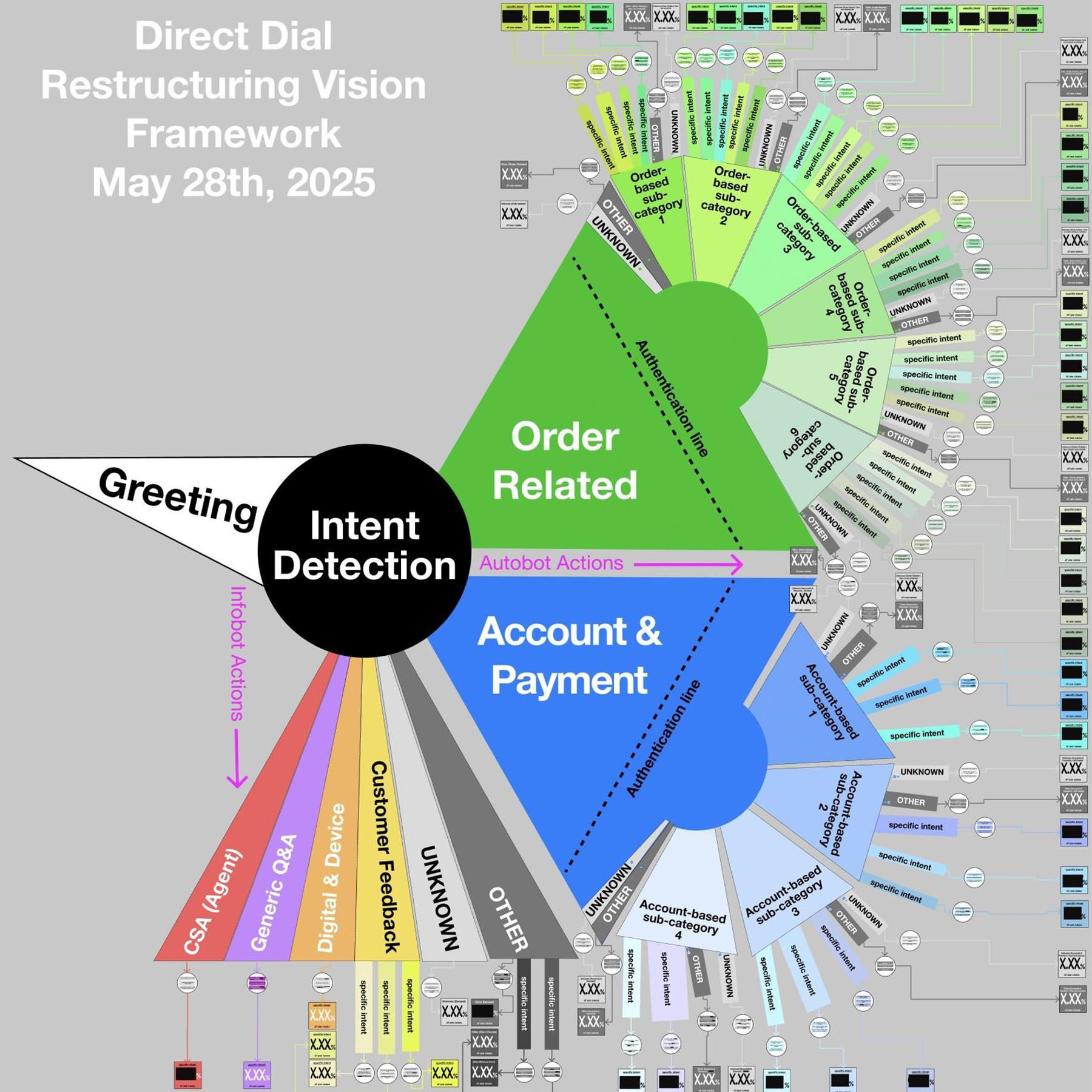

Amazon Customer Service authentication and the striated user flow I designed to describe the workflow processes. May 2025.

CONTEXT

Customers were hanging up before we could even ask why they were calling.

When I joined Amazon Customer Service, the IVR system had a structural problem that no amount of copy optimization could fix on its own: customers had to navigate more than two minutes of procedural steps (greetings, authentication, item selection) before they could say a single word about why they were calling.

That two-minute barrier created a 92% drop-off rate.

Most customers were hanging up before they ever got to explain their problem. And authentication, which was necessary to connect the call to an Amazon account, was the single biggest hurdle in that gauntlet. It forced an involuntary modal transition: users had to leave their chosen modality and return later, and transitions like that are where multimodal experiences break.

I had to figure out a way to make this system work.

THE ARCHITECTURE

First, I had to understand what I was actually dealing with.

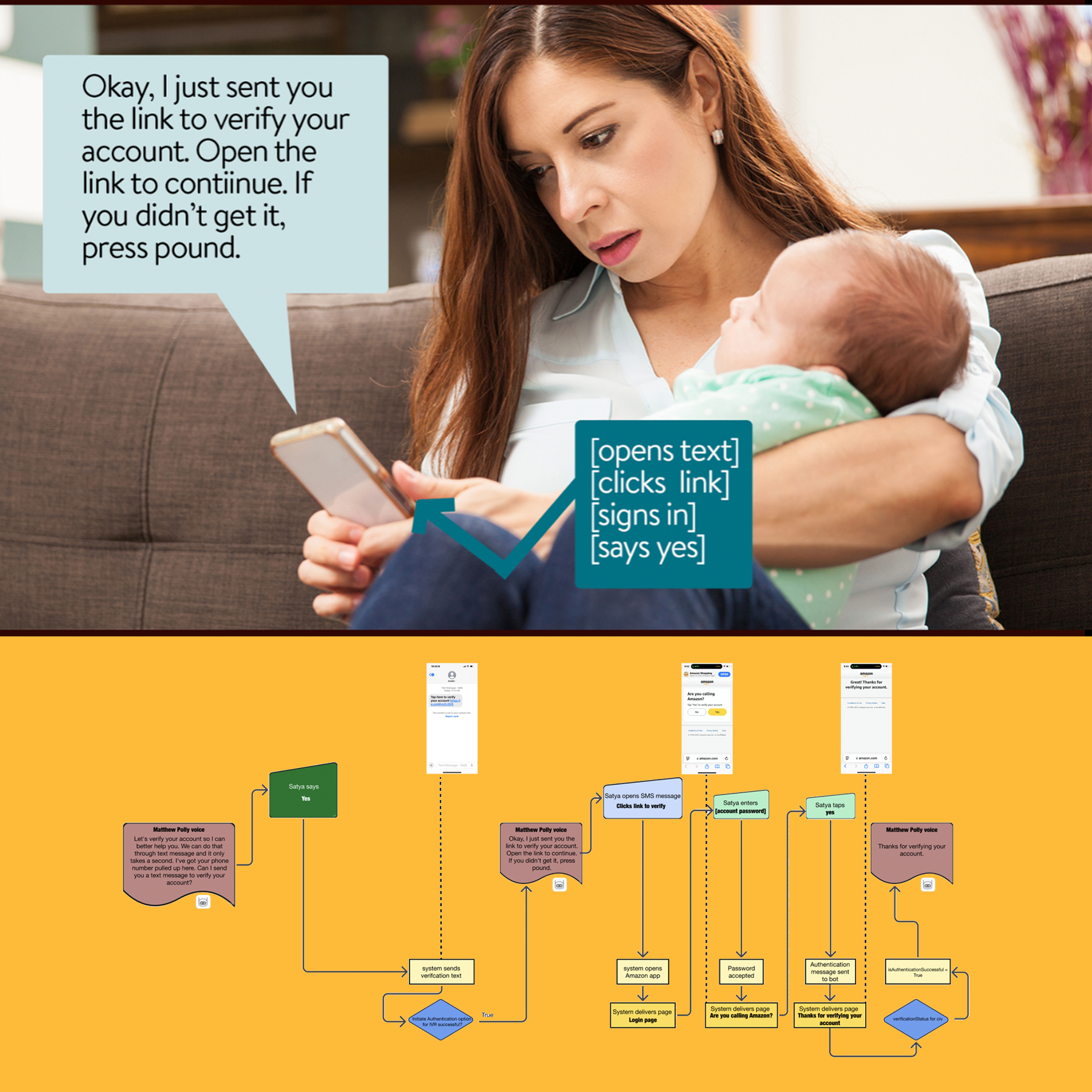

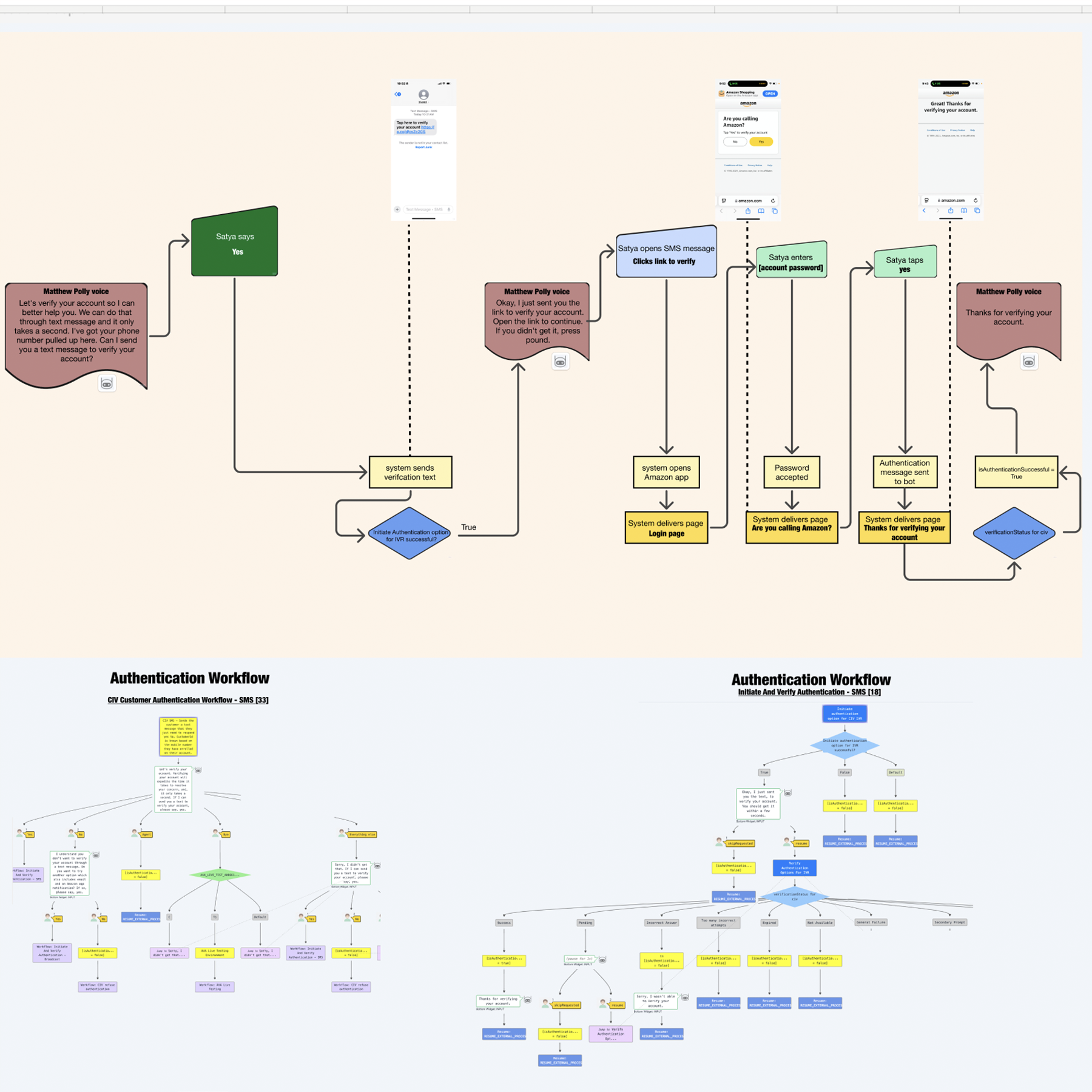

Authentication at Amazon Customer Service featured five distinct authentication types, each differentiated by how the system delivered the verification link — text, email, app notification, or combinations thereof. Before I could propose any changes, I needed to map exactly what was happening at the system level and where users were falling off.

I built three-layer flow maps of the existing experience. The top layer showed the customer journey: what they saw, heard, and were asked to do. The middle layer showed the system responses, with the under-the-hood processes happening in parallel below. The bottom layer showed the two named workflows handling authentication and intent detection. Once I understood which workflows were being triggered under which conditions, I worked with analytics to get real metrics on abandonment at each step.

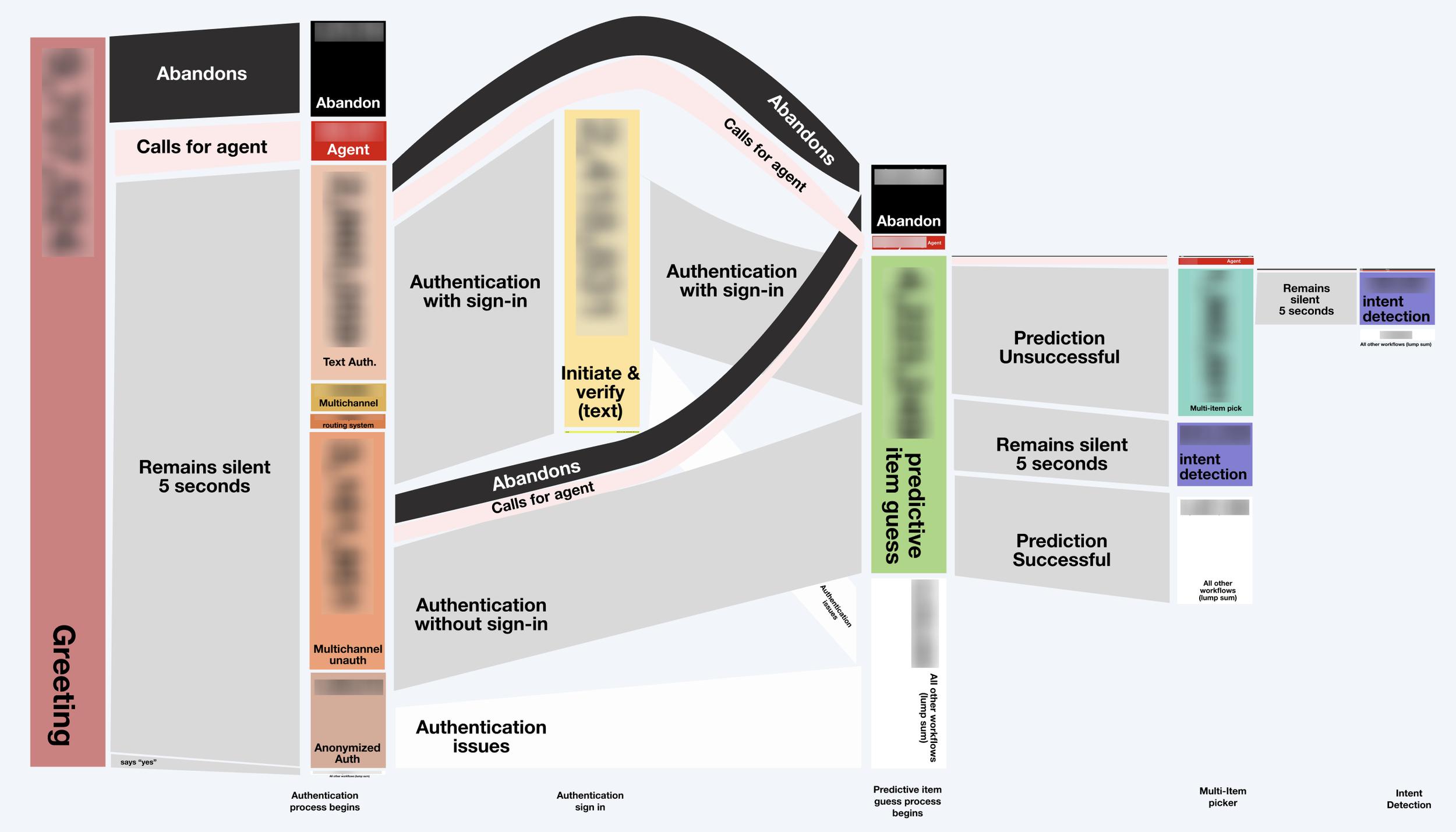

Then I built a Sankey chart to visualize the flow: where customers dropped off, which steps created the most friction, and which authentication paths had the highest abandonment. This gave leadership the first clear data picture of where design changes could actually make a measurable difference. It showed that authentication was a problem, but only one part of a larger architectural problem.
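To make the per-step abandonment idea concrete, here is a minimal Python sketch of the arithmetic behind a chart like this. It is an illustration only: `step_drop_off` is a hypothetical helper, the step names follow the procedural steps described earlier, and the counts are placeholders rather than Amazon data.

```python
from typing import List, Tuple


def step_drop_off(funnel: List[Tuple[str, int]]) -> List[Tuple[str, int, float]]:
    """For each step, return (step, callers lost before the next step, share lost).

    `funnel` is an ordered list of (step name, callers who reached that step).
    """
    rows = []
    for (step, reached), (_, reached_next) in zip(funnel, funnel[1:]):
        lost = reached - reached_next
        rate = lost / reached if reached else 0.0
        rows.append((step, lost, rate))
    return rows


# Placeholder counts for illustration only; NOT real call data.
example_funnel = [
    ("greeting", 1000),
    ("authentication", 800),
    ("item selection", 400),
    ("reason for calling", 200),
]

for step, lost, rate in step_drop_off(example_funnel):
    print(f"{step}: lost {lost} callers ({rate:.0%})")
```

Each (step, lost) pair corresponds to one "exit" link in a Sankey diagram, and the callers who continue form the link into the next step.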

THE CONSTRAINT

Amazon was the only voice experience doing it this way.

As a multimodal designer, I knew that you want to keep the user in the modality they purposefully selected (which in this case was voice). But although voice is a very accessible modality, confirming security details aloud is inherently insecure. You can't just ask customers questions like 'What's your password?' The involuntary modal transition, switching from voice to text or email, was the safest method available. But designing around those modal transitions is brutal. There are so many ways the experience breaks when you ask someone to leave the voice channel, act on a text or email, and come back.

I also analyzed benchmark testing across other major voice experiences to understand how they handled authentication. The finding was stark: Amazon was the only one that authenticated before asking the reason for calling. Every other voice experience asked "Why are you calling?" first, then authenticated.

Researching this delay in getting the customer’s reason for calling also led me to the work of John Heritage and the field of Conversation Analysis, specifically the principle that institutional voice interactions need to establish the reason for the interaction as early as possible to feel natural and purposeful. It gave me academic backing for what the data was already showing. I worked out a plan to move authentication later in the flow, but that would require months of iterative steps before leadership felt the system was secure enough to handle requests from unidentified users.

So the obstacle was: how do we keep authentication early (for security reasons), reduce the friction it creates (for UX reasons), and do it through a channel transition that's inherently fragile?

If we had to keep authentication early, the way we asked for it had to be persuasive.

THE APPROACH

It couldn't feel like a barrier. It had to feel like protection.

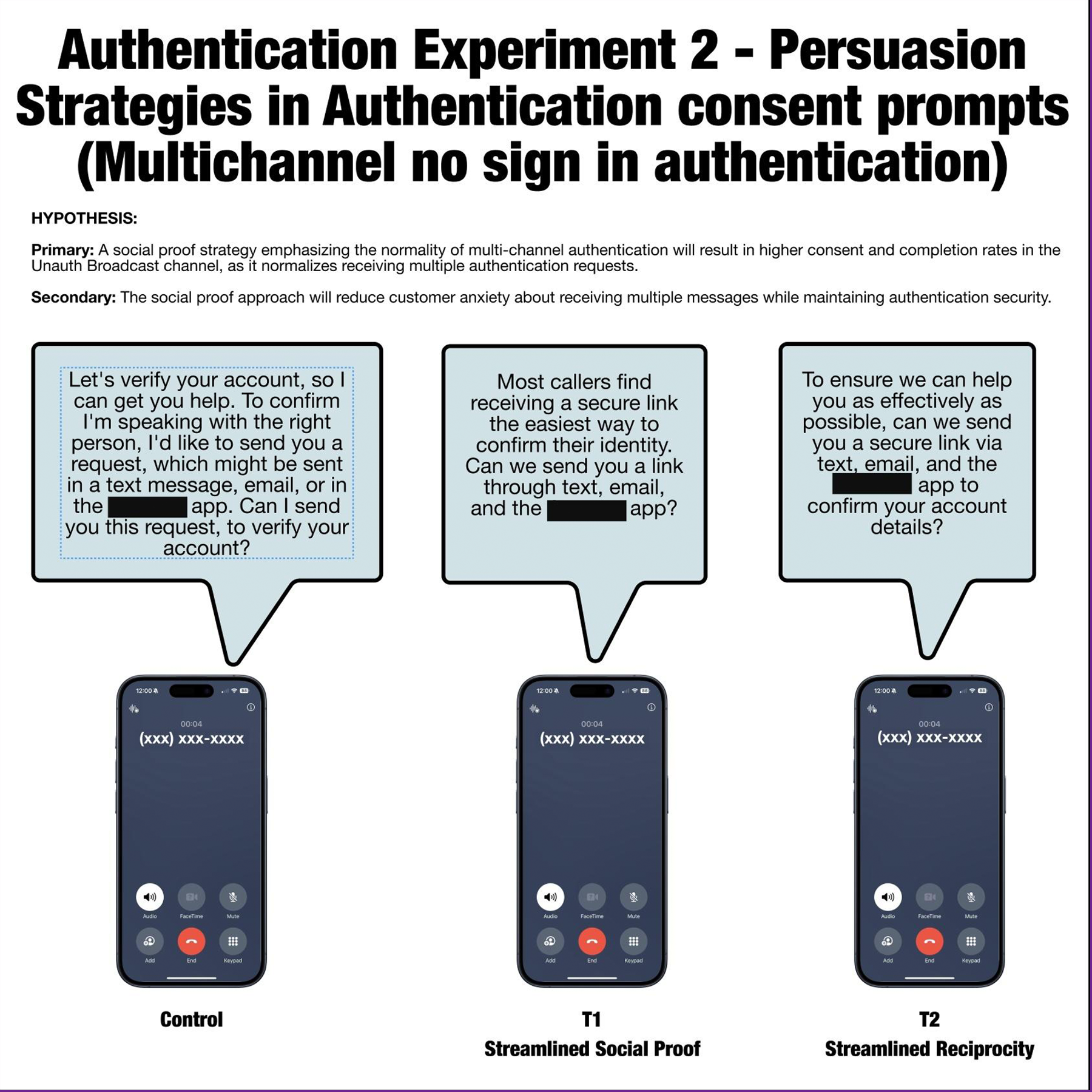

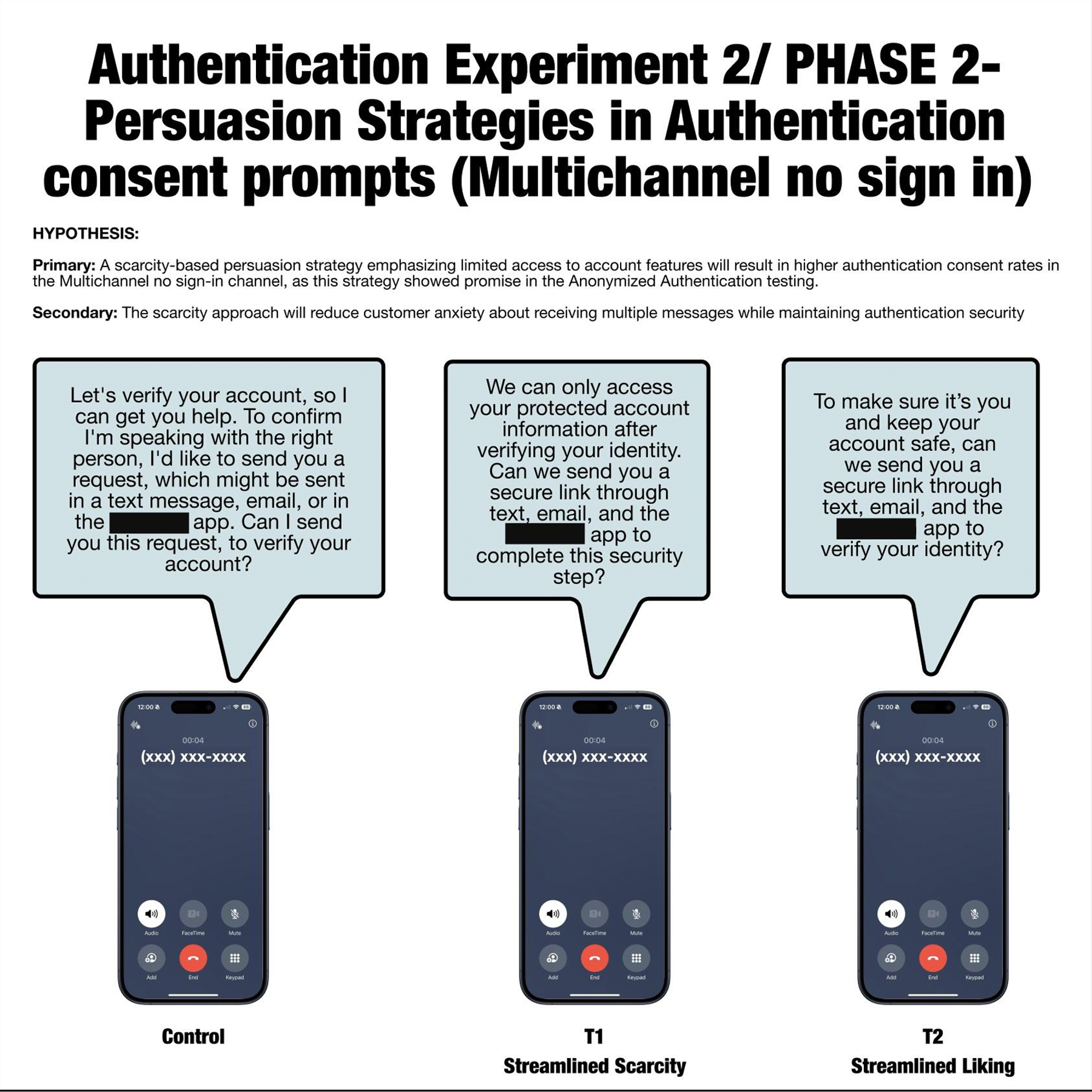

I turned to Robert Cialdini's six principles of behavioral persuasion: Scarcity, Reciprocity, Social Proof, Authority, Liking, and Consistency. Each principle offers a different psychological frame for the same ask. I designed a multi-variant A/B testing framework, running experiments across authentication types simultaneously in multiple global markets. The hypothesis was simple: different persuasion strategies would reduce friction and raise the likelihood that the user would agree to authenticate. What I didn't know going in was whether the same strategy would work in every market, or for every form of authentication. By carefully coordinating the variables we were testing, I ensured we could get a broader view of how the persuasion strategies were working.
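One common way to run this kind of multi-variant test is to bucket each caller deterministically, so the same caller always hears the same prompt within a given market and authentication type. The Python sketch below shows that general technique under stated assumptions; it is not the Amazon implementation. `assign_variant`, the `control` arm, and the idea that all six principles ran as separate arms are hypothetical.

```python
import hashlib
from typing import List, Optional

# Cialdini's six principles, used here as hypothetical variant names.
STRATEGIES = [
    "scarcity",
    "reciprocity",
    "social_proof",
    "authority",
    "liking",
    "consistency",
]


def assign_variant(
    caller_id: str,
    market: str,
    auth_type: str,
    variants: Optional[List[str]] = None,
) -> str:
    """Deterministically map a caller to one variant per (market, auth type)."""
    # Assumption: a control arm runs alongside the persuasion variants.
    arms = variants or ["control"] + STRATEGIES
    key = f"{market}:{auth_type}:{caller_id}".encode("utf-8")
    # A stable hash keeps the assignment consistent across calls and servers.
    bucket = int(hashlib.sha256(key).hexdigest(), 16) % len(arms)
    return arms[bucket]


# Hypothetical identifiers, for illustration only.
print(assign_variant("caller-123", "US", "sms"))
```

Including the market and authentication type in the hash key means each combination is randomized independently, which is what allows results to be compared per market and per authentication type.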

THE FINDINGS

One size does not fit all markets.

When the weblab results came back, the data told a more complex story than I expected — and a more interesting one.

In the US, the Social Proof strategy ("Most callers find this quick and easy") actually increased drop-off and agent escalation.

In the UK and India, Scarcity ("We can only access your protected account information after verifying your identity") improved acceptance rates.

In Germany and Canada, Social Proof outperformed every other strategy.

This meant I had to go back to Product and Engineering with a finding that made their lives harder: we couldn't pick one winning strategy and scale it globally. We needed to localize by market. That's a significant technical lift — it requires the system to support market-specific copy variations rather than a single global prompt. But the data justified it, and I made that case directly to stakeholders.
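In practice, market-specific copy can be as simple as a lookup with a fallback. The Python sketch below uses the two prompts quoted above and the market pairings reported in the findings. The function name, the market codes, and the choice of default strategy are assumptions for illustration, and a real system would also need translated copy per locale.

```python
# Prompt copy quoted in the findings above.
PROMPTS = {
    "scarcity": (
        "We can only access your protected account information "
        "after verifying your identity"
    ),
    "social_proof": "Most callers find this quick and easy",
}

# Strategy per market, following the reported results.
# The US entry reflects the scarcity testing that was implemented there.
MARKET_STRATEGY = {
    "US": "scarcity",
    "UK": "scarcity",
    "IN": "scarcity",
    "DE": "social_proof",
    "CA": "social_proof",
}

# Assumption: markets without their own result fall back to one default.
DEFAULT_STRATEGY = "scarcity"


def auth_prompt(market: str) -> str:
    """Return the authentication prompt for a market, with a global fallback."""
    strategy = MARKET_STRATEGY.get(market.upper(), DEFAULT_STRATEGY)
    return PROMPTS[strategy]


print(auth_prompt("DE"))
```

Keeping the market-to-strategy table separate from the copy itself means a new test result changes one table entry rather than the prompt logic.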

We implemented scarcity testing for the US market and continued experimenting with other strategies internationally. The framework I built was designed to be reusable — future teams could keep testing new persuasion strategies without rebuilding the testing architecture from scratch.

THE RESULTS

Small percentages. Enormous scale.

My changes were still being implemented when I left Amazon, but the early numbers were already significant:

~3% improvement in authentication acceptance rates

~6% reduction in negative customer sentiment

Hundreds of thousands of customer interactions affected annually

Reduced human-agent escalation across multiple markets

At Amazon's call volume, a roughly 3% improvement in authentication acceptance isn't a rounding error. It's a meaningful reduction in agent load, customer frustration, and call abandonment, every single day, across multiple continents.

The bigger finding was the one I didn't anticipate: market context directly shapes how customers interpret persuasion. What reads as reassuring in Germany reads as pressure in the US. Designing for that (not homogenizing globally) is how you actually get results at scale.

What this project taught me about design at scale

This project taught me that voice design, and increasingly LLM-powered design, isn't just about what users do. It's about how they interpret framing. The words you choose aren't decoration; they're the mechanism. Change the frame, change the behavior.

It also taught me something about organizational constraints. The "right" answer (moving authentication later in the flow) was architecturally sound but organizationally impossible in the short term. A designer who can only operate in ideal conditions isn't useful at a company like Amazon. You have to be able to find the lever that's actually available to you, and pull it hard enough to matter.

The persuasion framework I built didn't solve the two-minute problem. But it chipped away at the hardest part of it — and left behind a system that could keep chipping.